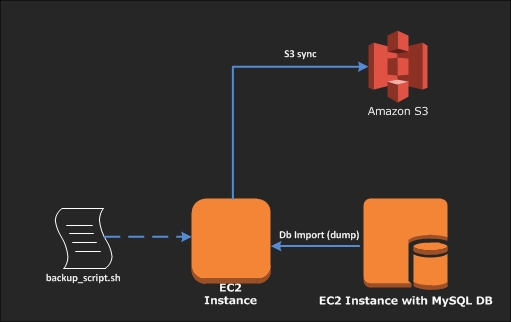

If you have a database on an EC2 instance, the question that comes up frequently is “how do I backup my database and where?”. The easiest option is to backup to Amazon’s S3 storage. This post shows you how to achive an automated database backup to S3 using a simple shell scripts that can be run on the database server.

Here’s a checklist of things that need to be in place for this to work:

- An IAM user with permissions to upload data into the S3 bucket

- The IAM user’s Access Key and Secret Key

- awscli (AWS Command line tool) installed and configured on the server

- An S3 bucket created to store the dB backups

1. Install awscli

Install awscli dependencies (if they do not already exist)

Run pip –version to see if your version of Linux already includes Python and pip

$ pip --version

If you don’t have pip, install pip as follows:

$ curl -O https://bootstrap.pypa.io/get-pip.py $ sudo python get-pip.py

Verify pip is successfuly installed:

$ pip --version pip 9.0.3 from /usr/local/lib/python2.7/dist-packages (python 2.7)

For detailed installation and troubleshooting go here: https://pip.pypa.io/en/stable/installing/

Installing the AWS CLI with Pip

Now use pip to install the AWS CLI:

$ pip install awscli --upgrade

Verify that the AWS CLI installed correctly.

$ aws --version aws-cli/1.14.63 Python/2.7.12 Linux/4.4.0-1049-aws botocore/1.9.16

Configuring AWS CLI

The aws configure command is the fastest way to set up your AWS CLI installation.

$ aws configure AWS Access Key ID [None]: AKIAIOSFODNN7EXAMPLE AWS Secret Access Key [None]: wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY Default region name [None]: us-east-1 Default output format [None]: json

For detailed AWS CLI configuration and installation options go here: https://docs.aws.amazon.com/cli/latest/userguide/cli-chap-getting-started.html

Now that we have all the dependencies set up, lets go ahead and create the bash script to back up our database:

2. Create the MySQL-S3-Backup Script

$ sudo mkdir -p /opt/scripts

$ cd /opt/scripts

$ sudo vim mysql_s3_backup.sh

#!/bin/bash

echo "$(tput setaf 3)Starting backup script at $TSTAMP" >> "$LOG_ROOT"

#create a few variables to contain the Database_credentials.

#Database credentials

USER="db_user_name"

PASSWORD="Your_dbPassw0rd"

HOST="db_host_name"

DB_NAME="database_name"

#Default backup & log file locations

BACKUPROOT="/backup_folder/db_dumps" # location where you want to temporarily store the database dumps locally

TSTAMP=$(date +"%d-%b-%Y")

S3BUCKET="s3://db-dump-bucket-name"

#logging

LOG_ROOT="/backup_folder/logs/db_dump.log" # Define where you want the script to save its logs

#Dump of Mysql Database into S3

mysqldump --single-transaction -h $HOST -u $USER -p$PASSWORD $DB_NAME > $BACKUPROOT/$DB_NAME-$TSTAMP.sql

echo "$(tput setaf 3)Finished backup of database at $TSTAMP" >> "$LOG_ROOT"

# Set the retention period and delete old backups. In this case, it is set to 15 days

# You can change the retention period by increasing/decreasubg -mtime from 15 to a value of your choice

find $BACKUPROOT/* -mtime +15 -exec rm {} \;

# Sync to S3

echo "$(tput setaf 3)Sending it in S3 Bucket at $TSTAMP" >> "$LOG_ROOT"

# This will sync the database backups that exist in the $BACKUPROOT folder (based on your retention period)

# and the S3 bucket, deleting older backups from S3 as they are removed from the local backup folder

aws s3 sync $BACKUPROOT $S3BUCKET --delete

echo "$(tput setaf 2)Moved the backup file from local to S3 bucket at $TSTAMP" >> "$LOG_ROOT"

echo "$(tput setaf 3)Script executed successfully at $TSTAMP" >> "$LOG_ROOT"

### Save it and exit (:wq)

3. Make it Executable and Test it

$ sudo chmod +x mysql_s3_backup.sh

Run the script to make sure it’s all good

$ sudo ./mysql_s3_backup.sh

If the script executes without errors, we can move on to setting up the cron job to run the script on a specified schedule.

4. Schedule it with Cron

Assuming the script is saved in /opt/scripts, lets set up a cron job to run a daily backup of the database:

$ sudo crontab -e ## Add the following line to crontab @daily /opt/scripts/mysql_s3_backup.sh

Thats it! You now have a simple system to automatically backup the MySQL database to an AWS S3 bucket.